Test Personnel and Abstraction

These four tasks focus on designing, implementing, and running the tests. Of course, they do not cover all aspects of testing. This categorization omits important tasks such as test management, maintenance, and documentation, among others. We focus on these four because they are essential to developing test values.

A challenge in using criteria-based test design is the amount and type of knowledge it requires. Many organizations have a shortage of highly technical test engineers. Few universities teach test criteria to undergraduates, and many graduate classes focus on theory, supporting research rather than practical application. The good news is that, with a well-planned division of labor, a single criteria-based test designer can support a fairly large number of test automators, executors, and evaluators.

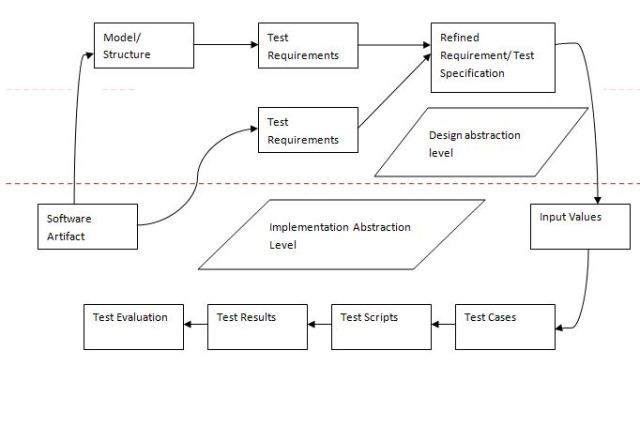

The model-driven test design (MDTD) process explicitly supports this division of labor. The process is illustrated in the figure, which shows test design activities above the line and other test activities below it.

MDTD lets test designers “raise their level of abstraction” so that a small subset of testers can do the mathematical aspects of designing and developing tests. This is analogous to construction design, where one engineer creates a design that is then followed by many carpenters, plumbers, and electricians. The traditional testers and programmers can then do their parts: finding values, automating the tests, running the tests, and evaluating them. This supports the truism that “testers ain’t mathematicians.”

The starting point in Figure 2.4 is a software artifact. This could be program source, a UML diagram, natural language requirements, or even a user manual. A criteria-based test designer uses that artifact to create an abstract model of the software in the form of an input domain, a graph, logic expressions, or a syntax description. A coverage criterion is then applied to the model to create test requirements. A human-based test designer instead uses the artifact to consider likely problems in the software, then creates requirements to test for those problems. These requirements are sometimes refined into a more specific form, called the test specification. For example, if edge coverage is being used, a test requirement specifies which edge in a graph must be covered. A refined test specification would be a complete path through the graph.
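The edge coverage example can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the four-node graph, the names `GRAPH`, `edge_requirements`, and `refine`, and the choice of shortest paths for refinement are all hypothetical and not part of the MDTD process itself.

```python
from collections import deque

# Hypothetical abstract model: a small control-flow graph,
# stored as node -> list of successor nodes.
GRAPH = {
    1: [2, 3],
    2: [4],
    3: [4],
    4: [],
}
START, END = 1, 4


def edge_requirements(graph):
    """Apply edge coverage: one test requirement per edge."""
    return [(src, dst) for src, succs in graph.items() for dst in succs]


def shortest_path(graph, source, target):
    """Breadth-first search; returns a list of nodes, or None if unreachable."""
    queue = deque([[source]])
    seen = {source}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for nxt in graph[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None


def refine(graph, edge, start, end):
    """Refine an edge requirement into a complete start-to-end test path."""
    src, dst = edge
    prefix = shortest_path(graph, start, src)
    suffix = shortest_path(graph, dst, end)
    if prefix is None or suffix is None:
        return None  # the edge cannot be toured from start to end
    return prefix + suffix


requirements = edge_requirements(GRAPH)
specifications = {edge: refine(GRAPH, edge, START, END) for edge in requirements}
```

Here `requirements` plays the role of the test requirements (the edges to cover) and `specifications` plays the role of the refined test specification (one complete path per edge). Several requirements may be refined into the same path, which is why a small set of paths can satisfy a larger set of requirements.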

Once the test requirements are refined, input values that satisfy the requirements must be defined. This brings the process down from the design abstraction level to the implementation abstraction level. These two levels are analogous to the abstract and concrete tests in the model-based testing literature. The input values are augmented with other values needed to run the tests (including values to reach the point in the software being tested, to display output, and to terminate the program). The test cases are then automated into test scripts (when feasible and practical) and run on the software to produce results, and the results are evaluated. It is important that results from automation and execution be fed back into test design, resulting in additional or modified tests.
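As a rough sketch of the step from abstract to concrete tests, the Python below pairs each test path with input values and an expected result, then executes and evaluates the tests. Everything here is assumed for illustration: the function `classify` stands in for the software under test, its node numbering is invented, and the `CONCRETE_TESTS` table and `run_tests` helper are hypothetical names.

```python
def classify(x):
    """Hypothetical software under test with a single decision."""
    if x < 0:                   # node 1: decision
        label = "negative"      # node 2
    else:
        label = "non-negative"  # node 3
    return label                # node 4


# Abstract tests are the test paths (the dictionary keys).
# Concrete tests add input values and expected results.
CONCRETE_TESTS = {
    (1, 2, 4): {"input": -5, "expected": "negative"},
    (1, 3, 4): {"input": 7, "expected": "non-negative"},
}


def run_tests(software_under_test, tests):
    """Execute each concrete test and evaluate its result (True means pass)."""
    results = {}
    for path, case in tests.items():
        actual = software_under_test(case["input"])
        results[path] = actual == case["expected"]
    return results


results = run_tests(classify, CONCRETE_TESTS)
```

The division of labor shows up in the structure: choosing the paths is a design task, choosing `-5` and `7` is value finding, `run_tests` is automation and execution, and comparing actual against expected results is evaluation. A failing entry in `results` is the kind of feedback that would prompt additional or modified tests.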

This process has two major benefits. First, it provides a clean separation of tasks between test design, automation, execution, and evaluation. Second, raising our abstraction level makes test design much easier. Instead of designing tests for a messy implementation or a complicated design model, we design at an elegant mathematical level of abstraction. This is exactly how algebra and calculus have been used in traditional engineering for decades.
